Access control has long been centred around models. Who should have access to what, when, and (maybe more importantly) why has fascinated computer security researchers since the 1970s. Several models for describing access have emerged over the years, many unfortunately lost to the academic past: taught but never used. However, they provide an excellent foundation for understanding how to tackle some of today’s problems, such as hybrid cloud, zero trust, contextual security and the distributed nature of being simultaneously mobile and cloud first.

The following models come to mind as being hugely important, if not in their entirety then certainly in part:

A few other, more implementation-related “models” are also pretty well known:

- Role Based Access Control (An accessible read by David F. Ferraiolo and D. Richard Kuhn still available on Amazon)

- Capability Based Access Control (See Macaroons and Biscuits for use on the modern web)

So what do the above have in common? They’re all models: end-to-end protocols or solutions that provide pretty much complete coverage for a set of requirements. However, whilst extremely powerful, they typically need to be implemented in their entirety to be successful. You can’t just pick and choose which parts of the Clark-Wilson model you wish to implement, then expect a system to operate in an integrity-preserving manner. The same goes for RBAC: creating roles was only one part of that hugely complex approach. You also needed to think about governance, simulation, access review, cleanup, metrics and so on.

Authorization: Where to Start?

Many projects focused on authorization start with enforcement. Why? Well, I guess this is seen as the most important part of the control life cycle: let’s stop bad things happening, so we need an enforcement point. This could be an actual “enforcement point” (aka the PEP in the XACML model, running as a separate piece of software) or, more metaphorically, a piece of code within an application that acts as the gatekeeper or reference monitor performing run-time checks. Can this subject access this object right now, under some particular conditions?
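To make the in-application gatekeeper idea concrete, here is a minimal sketch of a run-time check for the question above. Everything in it is an assumption made for illustration: the names `AccessRequest`, `decide`, `enforce` and `read_payroll_report`, the rule table, and the `network` condition do not come from XACML or any particular product.

```python
from dataclasses import dataclass, field


class AccessDenied(Exception):
    """Raised by the gatekeeper when a request is not authorized."""


@dataclass
class AccessRequest:
    subject: str                                  # who is asking
    action: str                                   # what they want to do
    resource: str                                 # the object being accessed
    context: dict = field(default_factory=dict)   # run-time conditions


# Hypothetical rule table: (subject, action, resource) -> condition on the context.
RULES = {
    ("alice", "read", "payroll-report"): lambda ctx: ctx.get("network") == "corporate",
}


def decide(request: AccessRequest) -> bool:
    """Default deny: permit only if a rule exists and its condition holds now."""
    condition = RULES.get((request.subject, request.action, request.resource))
    return condition is not None and condition(request.context)


def enforce(request: AccessRequest) -> None:
    """The enforcement point: block the call unless the decision is a permit."""
    if not decide(request):
        raise AccessDenied(
            f"{request.subject} may not {request.action} {request.resource}"
        )


def read_payroll_report(subject: str, context: dict) -> str:
    # The application calls the gatekeeper before touching the protected object.
    enforce(AccessRequest(subject, "read", "payroll-report", context))
    return "payroll report contents"
```

Calling `read_payroll_report("alice", {"network": "corporate"})` returns the report, while the same call with `{"network": "public"}` raises `AccessDenied`. Note how little the sketch says about where `RULES` came from, which is the harder question.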

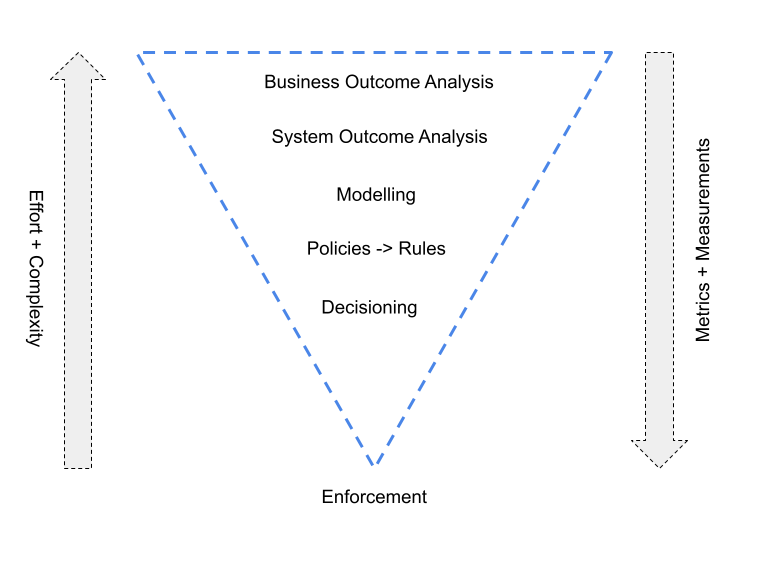

But rolling back: to get to an enforcement implementation, there are several other processes involved, all with differing levels of effort and complexity and often handled by different stakeholders. Something like the following will give a picture of the different aspects involved:

So enforcement is mostly based on rules, which may well be grouped into policies and policy sets. But how do we create rules and, in turn, policies? Well, you likely need to model them. How do we do that? Well, we need to understand the control requirements of the system we want to protect. OK, but the system is interdependent with other systems, both at run time and for persistent data, such as identity systems or application systems. So we actually need to understand the business outcome too: the job task that is impacted and that is, in turn, really what the authorization system is protecting. How do we do that? Through detailed business analysis involving the correct stakeholders.
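The grouping of rules into policies and policy sets can be sketched as a small data structure. This is an illustration under stated assumptions, not XACML itself: the class names, the `rationale` field (used here to tie each rule back to the business outcome it protects), the invoice example and the deny-overrides, default-deny combining strategy are all choices made for the sketch.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Rule:
    effect: str                       # "permit" or "deny"
    matches: Callable[[dict], bool]   # does this rule apply to the request?
    rationale: str                    # the business outcome the rule protects


@dataclass
class Policy:
    name: str
    rules: List[Rule]


@dataclass
class PolicySet:
    name: str
    policies: List[Policy]


def evaluate(policy_set: PolicySet, request: dict) -> str:
    """Combine rules with deny-overrides and default deny (assumed strategy)."""
    permitted = False
    for policy in policy_set.policies:
        for rule in policy.rules:
            if rule.matches(request):
                if rule.effect == "deny":
                    return "deny"      # any matching deny wins immediately
                permitted = True
    return "permit" if permitted else "deny"


# Hypothetical example: invoice approval in a finance system.
finance = PolicySet("finance", [
    Policy("invoice-approval", [
        Rule("permit",
             lambda r: r["role"] == "accounts-payable" and r["action"] == "approve",
             "Invoices are paid on time"),
        Rule("deny",
             lambda r: r["subject"] == r["invoice_creator"],
             "Separation of duties: nobody approves their own invoice"),
    ]),
])

bob = {"subject": "bob", "role": "accounts-payable",
       "action": "approve", "invoice_creator": "carol"}
carol = {"subject": "carol", "role": "accounts-payable",
         "action": "approve", "invoice_creator": "carol"}

print(evaluate(finance, bob))    # permit
print(evaluate(finance, carol))  # deny
```

Writing the rules down is the easy part. The `rationale` strings are the part that only business analysis with the right stakeholders can supply.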

The Forgotten Aspects of Enforcement

A second point to make regarding enforcement concerns the requirements for a reference monitor. This is usually seen as a low-level operating system component that handles access control. This 1972 paper by James Anderson is often cited as the first mention of the concept, where he stated:

“Elements of a model of controlled sharing can be found in the work on capability models. These elements, and the concept of a reference monitor which enforces the authorized access relationships between users and other elements of a system form the basis for the recommended approach to the development of a secure resource sharing system. In order to achieve the desired execution control of users programs, the concept of a Reference Monitor is used. The function of the reference monitor is to validate all references (to programs, data, peripherals, etc.) made by programs in execution against those authorized for the subject (user, etc.). The Reference Monitor not only is responsible to assure that the references are authorized to shared resource objects, but also to assure that the reference is the right kind (i.e., read, or read and write, etc.).”

For a reference monitor to be truly secure, though, it must satisfy a set of key properties:

- Tamper Proof – it can’t be altered in an unauthorised way

- Verifiable – it is small enough that formal tests can be run to identify unintended operations

- Complete Mediation – every request is checked

Do those points apply to the enforcement implementation if that is the first place to start an authorization project?
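As one way to picture complete mediation at the application level, the sketch below routes every read and write of a small data store through a single monitor, which validates both the reference and the kind of access. The class names `ReferenceMonitor` and `MediatedStore` are hypothetical, and the sketch makes no claim to being tamper proof: in Python, any code in the same process can reach the private attributes directly, which is exactly the sort of gap the properties above are meant to expose.

```python
class ReferenceMonitor:
    """Validates every reference against a fixed set of authorized accesses."""

    def __init__(self, authorized):
        # authorized: iterable of (subject, access_kind, obj) triples
        self._authorized = frozenset(authorized)
        self.audit_log = []

    def validate(self, subject, access_kind, obj):
        allowed = (subject, access_kind, obj) in self._authorized
        # Record every decision, so that mediation can be reviewed afterwards.
        self.audit_log.append((subject, access_kind, obj, allowed))
        return allowed


class MediatedStore:
    """A data store whose public operations all pass through the monitor."""

    def __init__(self, monitor):
        self._monitor = monitor
        self._data = {}

    def _require(self, subject, access_kind, key):
        if not self._monitor.validate(subject, access_kind, key):
            raise PermissionError(f"{subject} may not {access_kind} {key}")

    def read(self, subject, key):
        self._require(subject, "read", key)
        return self._data.get(key)

    def write(self, subject, key, value):
        self._require(subject, "write", key)
        self._data[key] = value


monitor = ReferenceMonitor({
    ("alice", "read", "doc"),
    ("alice", "write", "doc"),
    ("bob", "read", "doc"),
})
store = MediatedStore(monitor)
store.write("alice", "doc", "v1")
print(store.read("bob", "doc"))  # v1
```

Here `store.write("bob", "doc", "v2")` raises `PermissionError`, because bob is authorized to read but not to write. Keeping the monitor this small is also what makes it plausible to test exhaustively.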

Where Are Your Rules Coming From?

A final point to make is that whilst enforcement may well be codified in an “easy” (cost, time, effort) way, where are the rules coming from that the enforcement implementation will uphold? Even if they’re coming from a policy decision system that the enforcement implementation is querying, where is the decision point getting its rules from? The act of creating rules is often made simple, with a focus on developer adoption through languages, DevOps integration, policy as code and so on, but that does not necessarily help with describing why rules should be created. Perhaps implementations should focus on the why first, not just the how.

Summary

Authorization is a broad and exciting space, with newly emergent segments such as:

- Declarative Control (see DataWiza, aserto, Open Policy Agent, Styra, oso, Cerbos)

- Decoupled Authorization Platforms (see Cloudentity, PlainID, Axiomatics, Scaled Access (now part of OneWelcome))

- Access & Relationship Management (see AuthZed, ConductorOne, Indent, Authomize, opal.dev, Idence, Axis Security).

Essentially, it requires more than just enforcement. Absolutely get that aspect right, but don’t forget about the wider hierarchy of business and technical capabilities needed to make authorization scalable, secure and distributed across a range of protected assets.

About The Author

Simon Moffatt is Founder & Analyst at The Cyber Hut. He is a published author with over 20 years’ experience within the cyber and identity and access management sectors. His most recent book, “Consumer Identity & Access Management: Design Fundamentals”, is available on Amazon. He is a CISSP, CCSP, CEH and CISA. His 2022 research diary focuses upon “Next Generation Authorization Technology” and “Identity for The Hybrid Cloud”.